WWDC 2019 favorites

Here are the 5 things from WWDC that impressed me the most

2019-06-05

This past week was WWDC, Apple's conference for developers building on the range of Apple platforms. I have been watching WWDC for several years now, and this one did not disappoint. Here are my 5 favorite announcements from WWDC 2019.

SwiftUI

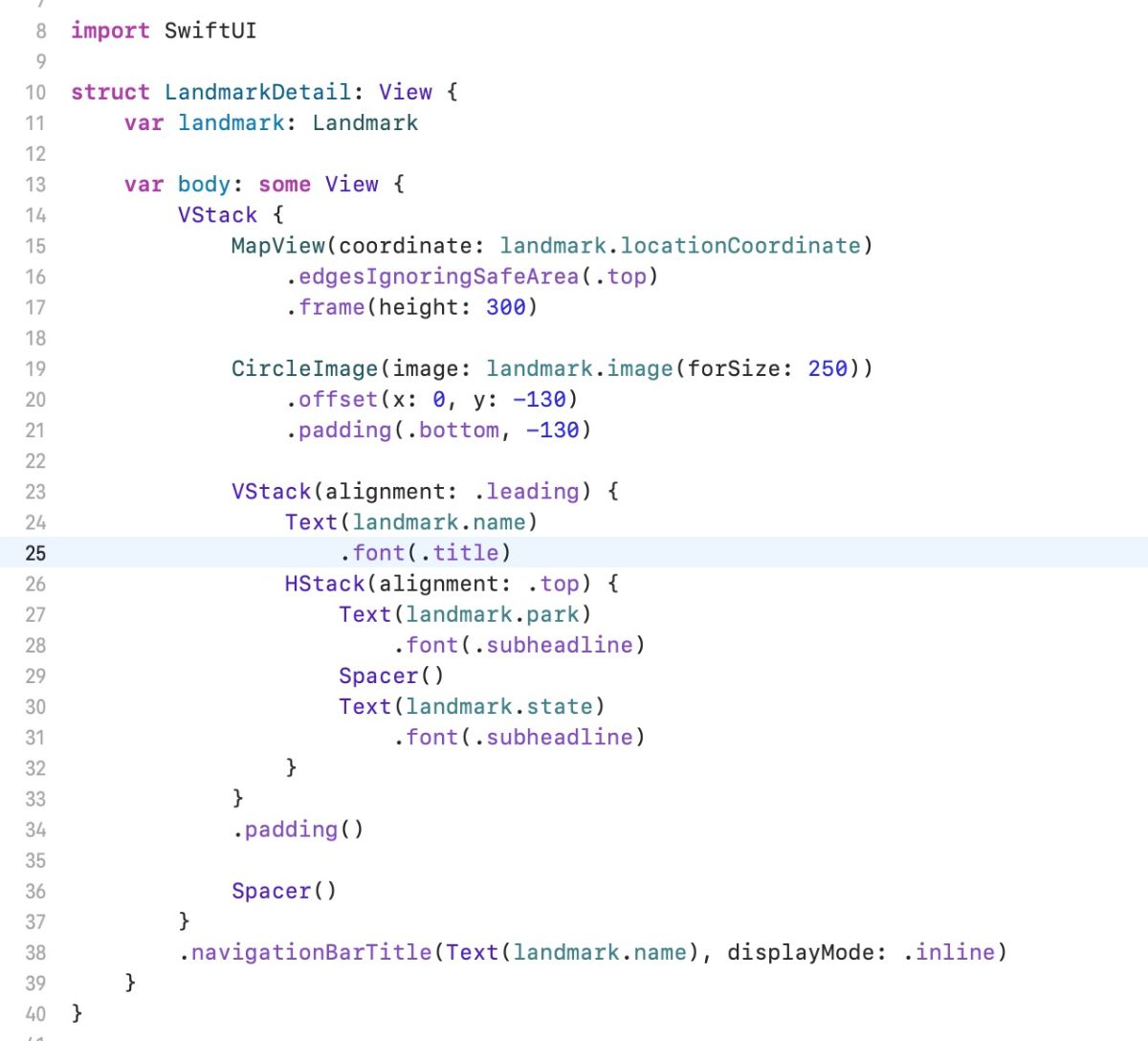

The first has to be SwiftUI. Coming from a web background, doing React on the front end, I've grown accustomed to the power and readability of declarative UI libraries. Your code reads like, and accurately represents, the UI it will produce. This makes your UI easier to reason about, and it is a huge improvement over the storyboard/Auto Layout juggling you used to have to do.

SwiftUI also gives you a straightforward way to declare reusable UI components within your Swift project. This was possible before with XIBs and a corresponding Swift file, but it's not immediately clear how to associate a XIB with a view, or how to instantiate a view that is backed by a XIB. And good luck getting your custom XIB view to show up in a storyboard. With SwiftUI, all of a component's code lives in one file, and it's easy to reuse that component in other SwiftUI code.
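To make this concrete, here is a minimal sketch of a reusable component and a screen that uses it, both in a single file. `ProfileBadge` and `ProfileScreen` are hypothetical names invented for this example, and the code assumes the iOS 13 SDK that introduced SwiftUI.

```swift
import SwiftUI

// A hypothetical reusable component: its whole layout lives in code,
// with no XIB or storyboard to keep in sync.
struct ProfileBadge: View {
    let name: String
    let role: String

    var body: some View {
        VStack(alignment: .leading) {
            Text(name)
                .font(.headline)
            Text(role)
                .font(.subheadline)
                .foregroundColor(.secondary)
        }
        .padding()
    }
}

// Reusing the component is just a matter of calling its initializer.
struct ProfileScreen: View {
    var body: some View {
        VStack {
            ProfileBadge(name: "Ada", role: "Engineer")
            ProfileBadge(name: "Grace", role: "Admiral")
        }
    }
}
```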

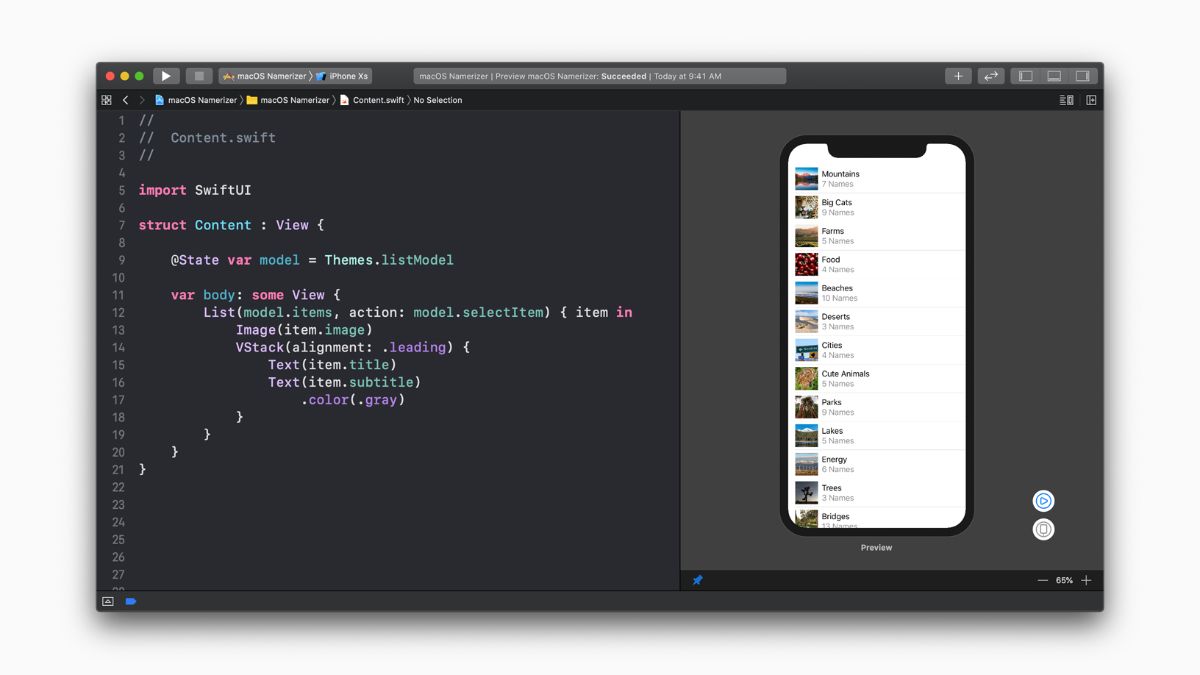

Alongside SwiftUI, Xcode got a big update to support it and to improve the developer experience. My longtime gripe with native development has been the slow feedback loop: change a file, rebuild the app, wait a few minutes, see your change. That makes building user interfaces very slow, especially when you are only making small tweaks. With SwiftUI and the latest Xcode, you get a live preview of your SwiftUI code right inside Xcode, which drastically shortens the time it takes to see whether a change is correct. There are also convenient popups and tools that write code for you. You can right-click on a view in the preview to get a contextual settings popup where you can edit properties, and Xcode inserts the corresponding code. This especially helps when you don't know which modifier you need to call to achieve a certain outcome.

Arguably the best part of the live preview, though, is that you can have more than one at the same time. Previews are powered by a small block of DEBUG-only code, generated automatically when you create a SwiftUI file, where you can supply test data for the preview. Within that block you can render several previews at once, each configured differently.

For example, you could have one preview for the happy path, one in dark mode, one in right-to-left layout, and one with a larger Dynamic Type size. If you are building a full screen, you can even preview it on different devices: one iPhone 8, one iPhone XS, one iPad. This is very powerful, because you get immediate feedback on multiple cases for a single UI. Before this feature, you had to run your app, switch the simulator or your device into the mode you wanted to test, and then navigate through your app to the screen in question.
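A minimal sketch of what that preview code can look like, assuming Xcode 11 and the iOS 13 SDK. `GreetingView` is a hypothetical view; the modifiers used (`environment(_:_:)` and `previewDevice(_:)`) are part of SwiftUI, and the device strings are simulator names that need to match simulators installed on your machine.

```swift
import SwiftUI

// A hypothetical view to preview.
struct GreetingView: View {
    let name: String

    var body: some View {
        Text("Hello, \(name)!")
            .padding()
    }
}

#if DEBUG
// Xcode renders every view returned from `previews` side by side in the canvas.
struct GreetingView_Previews: PreviewProvider {
    static var previews: some View {
        Group {
            // Happy path with sample data.
            GreetingView(name: "WWDC")

            // Dark mode.
            GreetingView(name: "WWDC")
                .environment(\.colorScheme, .dark)

            // Right-to-left layout.
            GreetingView(name: "WWDC")
                .environment(\.layoutDirection, .rightToLeft)

            // A large Dynamic Type size.
            GreetingView(name: "WWDC")
                .environment(\.sizeCategory, .accessibilityExtraLarge)

            // Specific devices, identified by simulator name.
            GreetingView(name: "WWDC")
                .previewDevice(PreviewDevice(rawValue: "iPhone 8"))
            GreetingView(name: "WWDC")
                .previewDevice(PreviewDevice(rawValue: "iPhone XS"))
        }
    }
}
#endif
```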

One other nice touch: the Text view treats any static string you give it as localizable by default. In most frameworks, to indicate that a string needs to be localized, you have to wrap or format it in a specific way so that the extraction process can find the strings that must be internationalized. With SwiftUI, if you provide a static string, Text("Translate Me"), or even a string with interpolation, Text("Translate \(who)"), it knows that string can be translated and replaced with a string from a different locale.
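A small sketch of where that default applies, assuming the iOS 13 SDK; `LocalizationExamples` is a hypothetical view. String literals passed to `Text` are treated as a `LocalizedStringKey`, while a `String` variable is displayed verbatim, which is worth knowing when text comes from a server or from user input.

```swift
import SwiftUI

struct LocalizationExamples: View {
    let who = "World"
    let serverMessage = "Loaded from the network"

    var body: some View {
        VStack {
            // A string literal becomes a LocalizedStringKey, so it is looked up
            // in Localizable.strings for the current locale.
            Text("Translate Me")

            // Interpolation also produces a key; here it should be "Translate %@".
            Text("Translate \(who)")

            // A String variable is shown as-is, without a localization lookup.
            Text(serverMessage)

            // `verbatim:` explicitly opts a literal out of localization.
            Text(verbatim: "v1.0")
        }
    }
}
```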

Sign In With Apple

In a time when everyone is tracking you and wants to know everything there is to know about you, Apple is the lone tech behemoth that has gone to great lengths to protect its customers' privacy. Sign In With Apple is the next step on that front. I'm sure you have seen the option to sign in with Facebook or Google on various websites. What you may not know is that when you use this convenience, Facebook and Google may share information about you with that website or app without your knowledge. Not only that, but Facebook and Google also record which websites and apps you use their service to sign in to, all in an effort to understand who you are and, as a result, show you ads they think you will click on.

With Sign In With Apple, that privacy issue goes away. You can sign in to an app or website without worrying that Apple will pass along extra information you don't want to share. Apple goes a step further by letting you withhold your real email address from the app entirely. Instead, Apple generates a random email address and gives that to the app. If the app sends an email to this generated address, Apple forwards it to you.
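From the developer's side, an app requests the user's name and email through the AuthenticationServices framework, and the user decides in the system sheet whether to share or hide their address. The sketch below is a minimal, assumed setup for a UIKit app on iOS 13; `SignInWithAppleCoordinator` is a hypothetical class. If the user chose to hide their address, `credential.email` is the Apple-generated relay address rather than their real one.

```swift
import AuthenticationServices
import UIKit

// Hypothetical coordinator that starts the Sign In With Apple flow.
final class SignInWithAppleCoordinator: NSObject,
    ASAuthorizationControllerDelegate,
    ASAuthorizationControllerPresentationContextProviding {

    private let anchor: ASPresentationAnchor
    // Stable identifier for the signed-in user, set after a successful sign-in.
    private(set) var lastUserID: String?

    init(anchor: ASPresentationAnchor) {
        self.anchor = anchor
    }

    func start() {
        let request = ASAuthorizationAppleIDProvider().createRequest()
        // Ask for name and email; the user can still choose to hide the email.
        request.requestedScopes = [.fullName, .email]

        let controller = ASAuthorizationController(authorizationRequests: [request])
        controller.delegate = self
        controller.presentationContextProvider = self
        controller.performRequests()
    }

    // The window the system sign-in sheet is presented over.
    func presentationAnchor(for controller: ASAuthorizationController) -> ASPresentationAnchor {
        return anchor
    }

    func authorizationController(controller: ASAuthorizationController,
                                 didCompleteWithAuthorization authorization: ASAuthorization) {
        guard let credential = authorization.credential as? ASAuthorizationAppleIDCredential else { return }
        lastUserID = credential.user
        // Only provided on the first authorization; may be a private relay address.
        if let email = credential.email {
            print("Email to use for this user: \(email)")
        }
    }

    func authorizationController(controller: ASAuthorizationController,
                                 didCompleteWithError error: Error) {
        print("Sign In With Apple failed: \(error.localizedDescription)")
    }
}
```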

Random email generation with forwarding to your real address was an idea I explored a year or so ago, but I ended up abandoning it because the logistics of how a user would actually use such a service seemed like too much. Integrating it directly into a sign-in method, though, makes it a seamless tool that users don't even have to think about. I do wish Apple would take it one step further, in the way I had envisioned a service like this operating. I'm sure Apple keeps track of which generated address was provided to which app, so it could enforce that each generated address only accepts mail from the app it was issued to. That way, if an app gave your email to a third party you did not authorize, Apple could let you know.
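The stricter behavior I'm wishing for could be sketched like this. To be clear, this is not how Apple's relay is documented to work; `AliasRelay`, its methods, and the `relay.example` domain are all hypothetical. It records which app domain each alias was issued to and only forwards mail whose sender matches. A counter stands in for random alias generation to keep the example deterministic.

```swift
// Hypothetical relay that remembers which app each alias was issued to.
struct AliasRelay {
    enum Decision: Equatable {
        case forward
        case blockAndNotifyUser(unexpectedSender: String)
        case unknownAlias
    }

    // alias address -> lowercased sender domain of the app it was issued to
    private var issuedTo: [String: String] = [:]
    private var counter = 0

    // A real service would generate a random alias; a counter keeps this predictable.
    mutating func issueAlias(forAppDomain appDomain: String) -> String {
        counter += 1
        let alias = "alias\(counter)@relay.example"
        issuedTo[alias] = appDomain.lowercased()
        return alias
    }

    // Forward only if the sender's domain matches the app the alias was issued to.
    func route(to alias: String, fromSender sender: String) -> Decision {
        guard let expectedDomain = issuedTo[alias] else { return .unknownAlias }
        let senderDomain = sender.split(separator: "@").last.map { $0.lowercased() } ?? ""
        return senderDomain == expectedDomain
            ? .forward
            : .blockAndNotifyUser(unexpectedSender: sender)
    }
}
```

Usage: after `var relay = AliasRelay()` and `let alias = relay.issueAlias(forAppDomain: "shop.example")`, mail from `orders@shop.example` is forwarded, while mail to the same alias from any other domain is blocked and flagged to the user.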

ARKit People Occlusion

I love 3D. Animation and game development are both things I wish I knew how to do better, so when Apple announced ARKit a while back, it was a big deal for me. The People Occlusion announcement is yet another step toward making AR immersive and practical. The feature was demoed with a new Minecraft app, in which the two presenters were embedded inside a Minecraft world, taking part in a shared multiplayer experience. While it was not entirely seamless, it is nonetheless a great step, and I can only imagine it will continue to improve.
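For developers, People Occlusion is exposed in ARKit 3 as a frame semantic you opt in to. A minimal sketch, assuming iOS 13; the function name is hypothetical. With this enabled, ARKit provides per-frame person segmentation and depth, which a renderer such as RealityKit can use to draw people in front of virtual content. Devices that don't support it simply run without it.

```swift
import ARKit

// Builds a world-tracking configuration that asks ARKit for person
// segmentation with depth, but only when the device supports it.
func makePeopleOcclusionConfiguration() -> ARWorldTrackingConfiguration {
    let configuration = ARWorldTrackingConfiguration()
    if ARWorldTrackingConfiguration.supportsFrameSemantics(.personSegmentationWithDepth) {
        configuration.frameSemantics.insert(.personSegmentationWithDepth)
    }
    return configuration
}

// Usage, given an existing ARSession (for example, `arView.session`):
// session.run(makePeopleOcclusionConfiguration())
```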

ARKit Motion Capture

When you hear about actors who play obviously CGI characters in movies, their performance is usually recorded using motion capture. The process typically involves the actor wearing a suit covered in reflective markers while surrounded by many infrared cameras that capture the markers' movement. Software then matches up each marker across every video feed to determine its movement through 3D space. The Xbox Kinect did something similar, but instead of many cameras it had two, and it projected infrared light into the room and looked for the reflections of its own projected light. So when Apple announced that the latest version of ARKit can do this whole process with a single camera, no suit, and no infrared, it made a great impression on me.

The demo was still a bit shaky, and it is by no means at the level of the Kinect, let alone real motion capture, but it is impressive nonetheless. Measuring depth with a single camera while tracking multiple points on the human body in real time is a remarkable feat, and I'm sure it will only get better.
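In ARKit 3 this shows up as body tracking. A minimal sketch, assuming iOS 13 and a supported device; `BodyTracker` is a hypothetical class. ARKit delivers an `ARBodyAnchor` whose skeleton exposes joint transforms, here read for the head joint.

```swift
import ARKit

// Hypothetical tracker that logs the head position of a detected body.
final class BodyTracker: NSObject, ARSessionDelegate {
    let session = ARSession()

    func start() {
        guard ARBodyTrackingConfiguration.isSupported else {
            print("Body tracking is not supported on this device.")
            return
        }
        session.delegate = self
        session.run(ARBodyTrackingConfiguration())
    }

    func session(_ session: ARSession, didUpdate anchors: [ARAnchor]) {
        for case let body as ARBodyAnchor in anchors {
            // Joint transforms are relative to the skeleton's root (hip) joint.
            if let head = body.skeleton.modelTransform(for: .head) {
                let position = head.columns.3
                print("head at x: \(position.x), y: \(position.y), z: \(position.z)")
            }
        }
    }
}
```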

Catalyst

For years Google has pushed the ability to run Android apps on Chromebooks, trying to increase the number of apps available for them. I'm not sure the Mac suffers from a similar problem, as there are a lot of apps on the Mac App Store. Granted, many of those apps are virus scanners and storage optimizers. But Apple wants more apps on the Mac, and a great place to get them is the iPad. Catalyst is a way for developers to port their iPad apps to the Mac. Apple claims this is a one-click change, which may be true for a minimal port, but later in WWDC they talked more about how to make an app feel right on each platform, rather than just running an iPad app on the Mac.
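Part of making a Catalyst app feel at home on the Mac is tailoring behavior per platform, and one mechanism for that is the `targetEnvironment(macCatalyst)` compile-time condition. A minimal sketch; `sidebarWidth()` and its values are hypothetical, chosen only for illustration.

```swift
import UIKit

// Hypothetical layout value that differs when the iPad app runs on the Mac.
func sidebarWidth() -> CGFloat {
    #if targetEnvironment(macCatalyst)
    // Built with Catalyst and running as a Mac app.
    return 260
    #else
    // Running on iPad or iPhone.
    return 320
    #endif
}
```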

The thing that will really make Catalyst successful is SwiftUI. SwiftUI works across all Apple platforms and renders platform-specific controls and views on each one. For example, a picker renders as a wheel on iOS but as a dropdown selector on the Mac.
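A minimal sketch of the picker example, assuming the 2019 SDKs (iOS 13 / macOS 10.15); `FlavorPicker` and its options are hypothetical. The same declaration is meant to compile for both platforms, and SwiftUI picks the native control for the default picker style. The exact control can also depend on context: inside a `Form` on iOS, for instance, a picker can appear as a row that pushes a list of options instead of a wheel.

```swift
import SwiftUI

struct FlavorPicker: View {
    @State private var flavor = "Vanilla"
    let flavors = ["Vanilla", "Chocolate", "Strawberry"]

    var body: some View {
        // One declaration; each platform renders its own native control.
        Picker("Flavor", selection: $flavor) {
            ForEach(flavors, id: \.self) { name in
                Text(name).tag(name)
            }
        }
    }
}
```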

Conclusion

Regardless of what Apple announced, the one lasting outcome of WWDC is that I feel empowered to build more things. Apple has a knack for helping people see new ways to solve problems: you hear the announcements and can't help but wonder how you could put these new features to use.