Paginating batchWriteItem and batchGetItem in DynamoDB

DynamoDB is a fully managed NoSQL document database provided by AWS

2020-12-01

DynamoDB has two methods for fetching or writing many items at once: batchGetItem and batchWriteItem. Each can operate on many items, across multiple tables, in a single call. But each comes with a few caveats we must take into account to ensure we operate on all items.

batchGetItem and batchWriteItem

There are two things to keep in mind when using batchGetItem and batchWriteItem.

- They both have a max number of items that can be fetched or written in a single operation.

- They both can only get or write up to 16MB of records at a time.

With batchGetItem, requesting more than 100 items in a single operation fails with an error. With batchWriteItem, the limit is 25 put or delete requests per operation. If you have more items than these limits allow, you must split them into batches and fetch or write each batch in turn.

If the items are very large, a batchGetItem call can also hit the 16MB response size limit before all requested items are returned. In that case DynamoDB returns a partial result, and the response lists the keys that have yet to be fetched. The same happens, for both methods, when some of the requests are throttled: the response to batchGetItem or batchWriteItem includes a map, keyed by table name, of the entries that were not processed. You can then call the method again with just these unprocessed entries, and continue until none remain.

UnprocessedKeys and UnprocessedItems

Let’s say, for example, that you want to fetch a batch of fewer than 100 items, but the response would exceed 16MB.

    // the low-level client expects typed attribute values, e.g. { S: '1' }
    const keys = [{ id: { S: '1' } }, { id: { S: '2' } } /* … */];

    const response = await dynamodbClient.batchGetItem({
      RequestItems: {
        myTable: {
          Keys: keys,
        },
      },
    }).promise();

The response will include a property called UnprocessedKeys, a map from table name to the keys that were not fetched because returning them would have exceeded the 16MB limit. It has the same shape as RequestItems, so to fetch the remaining results we can pass it directly as the RequestItems of the next call to batchGetItem.

    const results = response.Responses.myTable;

    const nextResponse = await dynamodbClient.batchGetItem({
      RequestItems: response.UnprocessedKeys,
    }).promise();

    results.push(...nextResponse.Responses.myTable);

The idea is essentially the same with batchWriteItem, only the property is called UnprocessedItems, which can likewise be passed directly into the next batchWriteItem as RequestItems.
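The example above only covers reads. As a minimal sketch of the write side, assuming the low-level client from the AWS SDK for JavaScript v2 (where attribute values are typed, such as `{ S: '1' }`): each entry in a batchWriteItem request is wrapped in either a PutRequest or a DeleteRequest. The helper names `toWriteRequests` and `writeOnce` are hypothetical, and `client` stands for any object exposing `batchWriteItem(...).promise()`.

```javascript
// Hypothetical helper: wrap items to put and keys to delete in the
// PutRequest / DeleteRequest envelopes that batchWriteItem expects.
function toWriteRequests(itemsToPut = [], keysToDelete = []) {
  return [
    ...itemsToPut.map((Item) => ({ PutRequest: { Item } })),
    ...keysToDelete.map((Key) => ({ DeleteRequest: { Key } })),
  ];
}

// Hypothetical helper: send one batch (25 requests or fewer) and return
// whatever DynamoDB reports as unprocessed for this table.
async function writeOnce(client, tableName, requests) {
  const response = await client
    .batchWriteItem({ RequestItems: { [tableName]: requests } })
    .promise();
  return response.UnprocessedItems?.[tableName] ?? [];
}
```

The array returned by `writeOnce` has the same shape as the requests passed in, so it can be sent again unchanged until it comes back empty.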

Fetch/Write all at once

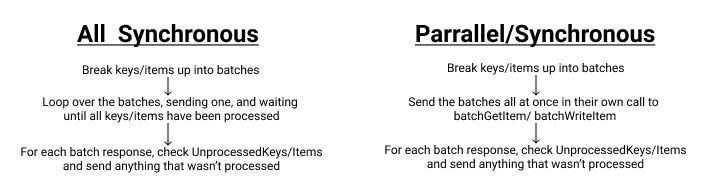

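Because batchGetItem and batchWriteItem must be called in batches of at most 100 and 25 items respectively, we have two options for operating on all items at once. We can either process the batches sequentially, one at a time (the synchronous approach), or start all batches in parallel while each batch handles its own retries sequentially (the hybrid parallel/synchronous approach).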

Creating Batches

First of all, we should define a function to create the batches.

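    function batch(array, size) {
      // work on a copy so the caller's array is left untouched
      const copy = array.slice();
      const batches = [];

      // a while loop (rather than do/while) avoids emitting
      // an empty batch when the input array is empty
      while (copy.length > 0) {
        batches.push(copy.splice(0, size));
      }

      return batches;
    }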

Synchronous

The synchronous approach processes one batch at a time, retrying until no UnprocessedKeys remain before moving on to the next batch.

    const batches = batch(itemsToGet, 100);
    const results = [];

    // loop over each batch of keys
    for (const keysBatch of batches) {
      // keys still to be fetched for this batch
      let keys = keysBatch;

      // repeat the call until every key in the batch has been processed
      do {
        const response = await dynamodbClient.batchGetItem({
          RequestItems: {
            myTable: { Keys: keys },
          },
        }).promise();

        results.push(...(response.Responses?.myTable ?? []));

        // UnprocessedKeys has the same shape as RequestItems, so the
        // remaining keys for the next iteration live under myTable.Keys
        keys = response.UnprocessedKeys?.myTable?.Keys ?? [];
      } while (keys.length > 0);
    }
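The loop above retries unprocessed keys immediately. AWS recommends using exponential backoff when retrying unprocessed keys or items, because an immediate retry is likely to be throttled again. The following is a minimal sketch of that idea, not a definitive implementation: the function name `batchGetWithBackoff` and the `maxAttempts` and `baseDelayMs` options are assumptions made for illustration, and `client` is any object exposing `batchGetItem(...).promise()`.

```javascript
// Resolve after `ms` milliseconds.
const sleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Hypothetical helper: fetch one batch (100 keys or fewer), retrying
// UnprocessedKeys with exponential backoff between attempts.
// maxAttempts and baseDelayMs are illustrative defaults, not SDK settings.
async function batchGetWithBackoff(
  client,
  tableName,
  keys,
  { maxAttempts = 5, baseDelayMs = 50 } = {}
) {
  const results = [];
  let pending = keys;

  for (let attempt = 0; pending.length > 0; attempt++) {
    if (attempt >= maxAttempts) {
      throw new Error(
        `${pending.length} keys still unprocessed after ${maxAttempts} attempts`
      );
    }
    if (attempt > 0) {
      // the delay doubles before each retry: base, 2x base, 4x base, ...
      await sleep(baseDelayMs * 2 ** (attempt - 1));
    }

    const response = await client
      .batchGetItem({ RequestItems: { [tableName]: { Keys: pending } } })
      .promise();

    results.push(...(response.Responses?.[tableName] ?? []));
    pending = response.UnprocessedKeys?.[tableName]?.Keys ?? [];
  }

  return results;
}
```

Throwing after a fixed number of attempts is a design choice: it keeps a persistently throttled table from turning the loop into an endless retry.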

Parallel/Synchronous

The parallel/synchronous approach starts all batches at the same time, and each batch then retries its own UnprocessedItems sequentially until none remain.

    const batches = batch(itemsToWrite, 25);

    // start every batch at the same time, then wait for all of them to finish
    await Promise.all(
      batches.map(async (itemsBatch) => {
        // write requests still to be sent for this batch
        let items = itemsBatch;

        do {
          const response = await dynamodbClient.batchWriteItem({
            RequestItems: {
              myTable: items,
            },
          }).promise();

          // if there are UnprocessedItems, capture them into items
          // to be sent in the next iteration
          items = response.UnprocessedItems?.myTable ?? [];
        } while (items.length > 0);
      })
    );

Exceeding Throughput

One thing to note about the parallel/synchronous approach: if you are reading or writing a lot of items, you can very quickly exceed your table's throughput capacity. So be careful how you use it, or enable on-demand billing for your DynamoDB table.
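One way to reduce the risk of exceeding throughput is to cap how many batches are in flight at once instead of starting them all together. The following is a minimal sketch of such a cap, under the assumption that a fixed concurrency limit suits your workload. `mapWithConcurrency` is a hypothetical helper, not part of the AWS SDK.

```javascript
// Hypothetical helper: run `worker` over `items` with at most `limit`
// calls in flight at once, returning the results in input order.
// `limit` is expected to be at least 1.
async function mapWithConcurrency(items, limit, worker) {
  const results = new Array(items.length);
  let next = 0;

  // Each runner claims the next unclaimed index until none remain.
  // JavaScript is single-threaded, so `next++` cannot race.
  async function runner() {
    while (next < items.length) {
      const index = next++;
      results[index] = await worker(items[index], index);
    }
  }

  // start up to `limit` runners and wait for all of them to drain the list
  const runners = Array.from(
    { length: Math.min(limit, items.length) },
    () => runner()
  );
  await Promise.all(runners);
  return results;
}
```

With this helper, the `Promise.all(batches.map(...))` call could become something like `mapWithConcurrency(batches, 4, writeBatch)`, where `writeBatch` is a hypothetical function holding the per-batch do/while loop and 4 is an arbitrary starting point to tune against your table's capacity.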

Paginating query and scan in DynamoDB

This post is one of two blog posts about paginating results in DynamoDB. The companion post covers how to paginate results for the query and scan operations.